In the complex world of SEO, there’s a powerful yet often overlooked file working behind the scenes to control how search engines interact with your website—this file is the robots.txt. Though small in size, the robots.txt file plays a significant role in shaping your website’s visibility and ranking on search engine results pages (SERPs). But what exactly is this file, and why is it so essential for SEO? Let’s dive into the details.

What is Robots.txt?

The robots.txt file is a simple text file that lives in the root directory of your website. Its main function is to give instructions to web crawlers (also known as bots), such as Googlebot, on which parts of your site they may crawl. Think of it as a gatekeeper that tells search engines which parts of your website they can access and which they should leave alone.

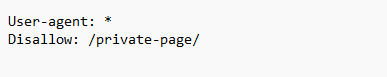

Here’s what a typical robots.txt file might look like:

Image 1 showcase the example of robots.txt

In this example:

- User-agent specifies which bot the rule applies to (an asterisk (*) represents all bots).

- Disallow prevents bots from accessing certain directories or pages—in this case, “/private-page/.”
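To see how a crawler might interpret these two directives, here is a minimal sketch using Python's standard-library urllib.robotparser module. The file contents mirror the example described above (all bots, “/private-page/” disallowed); the example.com URLs and the "MyBot" name are placeholders chosen for illustration.

```python
from urllib.robotparser import RobotFileParser

# Assumed file contents, mirroring the example described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private-page/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# "MyBot" and example.com are placeholders for illustration only.
private_allowed = parser.can_fetch("MyBot", "https://example.com/private-page/")
public_allowed = parser.can_fetch("MyBot", "https://example.com/blog/")

print(private_allowed)  # False
print(public_allowed)   # True
```

Because the rule uses the asterisk, any bot name passed to can_fetch gets the same answer here. Real crawlers may differ from this parser in edge cases, so treat it as an approximation.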

Why Does Robots.txt Matter for SEO?

Now that you understand the basic function of the robots.txt file, let’s explore why it matters to your SEO strategy.

1. Control Over Search Engine Crawling

While it may sound beneficial to have every part of your site indexed by search engines, not all pages contribute positively to your SEO. For example, pages like admin portals, duplicate content, or unfinished content can dilute your search engine rankings. By using robots.txt, you can instruct crawlers to skip these pages, allowing them to focus on indexing the pages that are more important for ranking.

2. Maximize Crawl Budget

Every website has a crawl budget, which is the number of pages a search engine’s crawler will scan and index in a given period. For large websites, ensuring that search engines focus on high-priority pages is crucial. By using the robots.txt file to exclude non-essential pages, you can help search engines use your crawl budget more efficiently, improving the chances of important pages being crawled and indexed regularly.

3. Improving Load Speed and User Experience

Some parts of your website, like heavy media files or complex scripts, can take up a large share of crawler requests, which adds load to your server and leaves less crawl capacity for the pages that matter. Disallowing non-essential resources through robots.txt reduces that bot traffic, which can free up server resources for real visitors. Avoid blocking CSS or JavaScript files that your pages need in order to render, because search engines use them to understand your content.

4. Preventing Indexing of Duplicate Content

Duplicate content can be an SEO nightmare. Pages with similar content, such as printer-friendly versions of a webpage or session-specific URLs, can confuse search engines and split ranking signals across several URLs. The robots.txt file can be used to stop crawlers from fetching these duplicate pages, keeping your SEO in top shape.

5. Enhancing Security and Privacy

Sometimes, websites contain sensitive or private information that you don’t want accessible to the public or indexed by search engines. Although robots.txt is not a security measure in itself, it can serve as a directive for search engines to avoid crawling sections like login portals or administrative areas, making it less likely that they show up in SERPs.

Key Robots.txt Directives: A Quick Overview

Here’s a comparison of common robots.txt directives and their functions:

| Directive | Function | Example |
| --- | --- | --- |
| User-agent | Specifies which bots the rules apply to | User-agent: * (applies to all bots) |
| Disallow | Blocks bots from crawling specific pages or directories | Disallow: /private-page/ |
| Allow | Lets certain pages be crawled even in a disallowed directory | Allow: /public-page/ |
| Sitemap | Points bots to the website’s sitemap for better indexing | Sitemap: https://example.com/sitemap.xml |
| Crawl-delay | Slows down the rate at which bots crawl your site (support varies by crawler; Googlebot ignores it) | Crawl-delay: 10 (10-second delay) |

Table 1 shows common robots.txt directives and their functions.
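The directives in Table 1 can be combined in a single file. The sketch below builds a hypothetical file and reads it back with Python's urllib.robotparser; the paths and the example.com domain are assumptions made for illustration. Note one simplification: Python's parser applies the first matching rule, so the Allow line is listed before the Disallow line, whereas Google documents that the most specific matching rule wins regardless of order.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file combining the directives from Table 1.
# Allow comes first because this parser uses first-match semantics.
ROBOTS_TXT = """\
User-agent: *
Allow: /private-page/public-page/
Disallow: /private-page/
Crawl-delay: 10

Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A page inside the disallowed directory is blocked...
secret_ok = parser.can_fetch("*", "https://example.com/private-page/secret.html")
# ...but the explicitly allowed subdirectory is not.
exception_ok = parser.can_fetch("*", "https://example.com/private-page/public-page/")

delay = parser.crawl_delay("*")   # value of Crawl-delay, in seconds
sitemaps = parser.site_maps()     # requires Python 3.8 or later

print(secret_ok, exception_ok)    # False True
print(delay)                      # 10
print(sitemaps)                   # ['https://example.com/sitemap.xml']
```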

Common Mistakes to Avoid

While robots.txt can significantly boost your SEO, a few common mistakes could backfire.

- Blocking important pages: Accidentally disallowing crucial pages or entire sections of your website can prevent them from being indexed, resulting in a drop in search rankings.

- Over-reliance on robots.txt for security: While the file can block crawlers, it doesn’t secure your data. Search engines may still list disallowed URLs if other sites link to them.

- Incorrect syntax: A single syntax error, such as a misspelled directive, a missing colon, or a stray slash, can cause bots to misread or ignore your instructions.
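Small differences in the file can change its meaning entirely. The following sketch, again using Python's urllib.robotparser, compares three hypothetical files; the is_allowed helper and the example.com URL are illustrative names, not part of any standard. The behavior shown is that of Python's parser, and other crawlers may handle malformed files differently.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, url: str, agent: str = "*") -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


URL = "https://example.com/products/"  # placeholder URL

# One character apart, opposite meaning.
block_everything = "User-agent: *\nDisallow: /\n"
block_nothing = "User-agent: *\nDisallow:\n"

# A rule with no preceding User-agent line: this parser skips it.
orphan_rule = "Disallow: /products/\n"

print(is_allowed(block_everything, URL))  # False
print(is_allowed(block_nothing, URL))     # True
print(is_allowed(orphan_rule, URL))       # True
```

The first file blocks the whole site, the second blocks nothing, and the third looks like it blocks /products/ but has no effect because the rule does not belong to any User-agent group.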

Best Practices for Using Robots.txt in SEO

- Review crawl reports regularly: Use tools like Google Search Console to monitor how your website is being crawled and to check if your robots.txt directives are being followed correctly.

- Test your robots.txt: Before deploying your robots.txt file, validate it with a tool such as the robots.txt report in Google Search Console (which replaced the older robots.txt Tester) to ensure there are no errors that could harm your SEO.

- Be selective: Avoid blocking pages that contain valuable content for SEO purposes, like product pages, blog posts, or landing pages.
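One way to put the testing and selectivity advice into practice is a small pre-deployment check that lists any important URLs a draft file would block. This is a minimal sketch under stated assumptions: find_blocked, the draft rules, and the example.com URLs are all hypothetical, and Python's urllib.robotparser only approximates how a given search engine reads the file.

```python
from urllib.robotparser import RobotFileParser


def find_blocked(robots_txt: str, urls: list[str], agent: str = "Googlebot") -> list[str]:
    """Return the URLs from `urls` that `agent` is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


# Hypothetical draft: "/blog" was meant to hide "/blog-drafts/",
# but as a path prefix it also matches every URL under "/blog/".
DRAFT = """\
User-agent: *
Disallow: /admin/
Disallow: /blog
"""

# Pages that should stay crawlable (placeholders for illustration).
IMPORTANT_URLS = [
    "https://example.com/",
    "https://example.com/products/widget",
    "https://example.com/blog/seo-tips",
]

blocked = find_blocked(DRAFT, IMPORTANT_URLS)
print(blocked)  # ['https://example.com/blog/seo-tips']
```

Running a check like this before publishing a new robots.txt makes an accidental block visible early. It complements the crawl reports in Search Console and does not replace them.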

In conclusion, the robots.txt file is more than just a technical SEO tool: it’s a strategic asset that can influence how search engines crawl and index your website. By controlling which pages search engines access, maximizing your crawl budget, and keeping crawlers away from sensitive areas of your site, you can improve your SEO performance and enhance user experience. Just be sure to configure it correctly, as small errors in this tiny file can have big consequences for your website’s visibility on search engines.
